The Short Answer: Data interoperability is the ability of different systems to exchange data and use it in a meaningful way, without manual workarounds or custom fixes. It goes beyond moving information from point A to point B. Interoperable systems share, interpret, and act on data automatically.

For telecom and broadband providers, operations likely run on a mix of legacy platforms and newer tools: OSS/BSS systems, provisioning engines, field service applications, billing platforms, and reporting dashboards.

Each one generates and consumes important data. When those systems can’t talk to each other, you get data silos, duplicate entries, delayed workflows, and limited visibility across the business. Data interoperability solves that by connecting systems so information flows where it needs to go, in a format each platform can actually use, in real time or close to it.

Below, we’ll walk through what data interoperability looks like in practice, why it matters for operators, and how to start building it into your environment.

What is Data Interoperability?

Data interoperability is a system’s ability to exchange data with other systems and make that data usable on both sides. It means different platforms can share information without someone manually reformatting, re-entering, or translating it along the way.

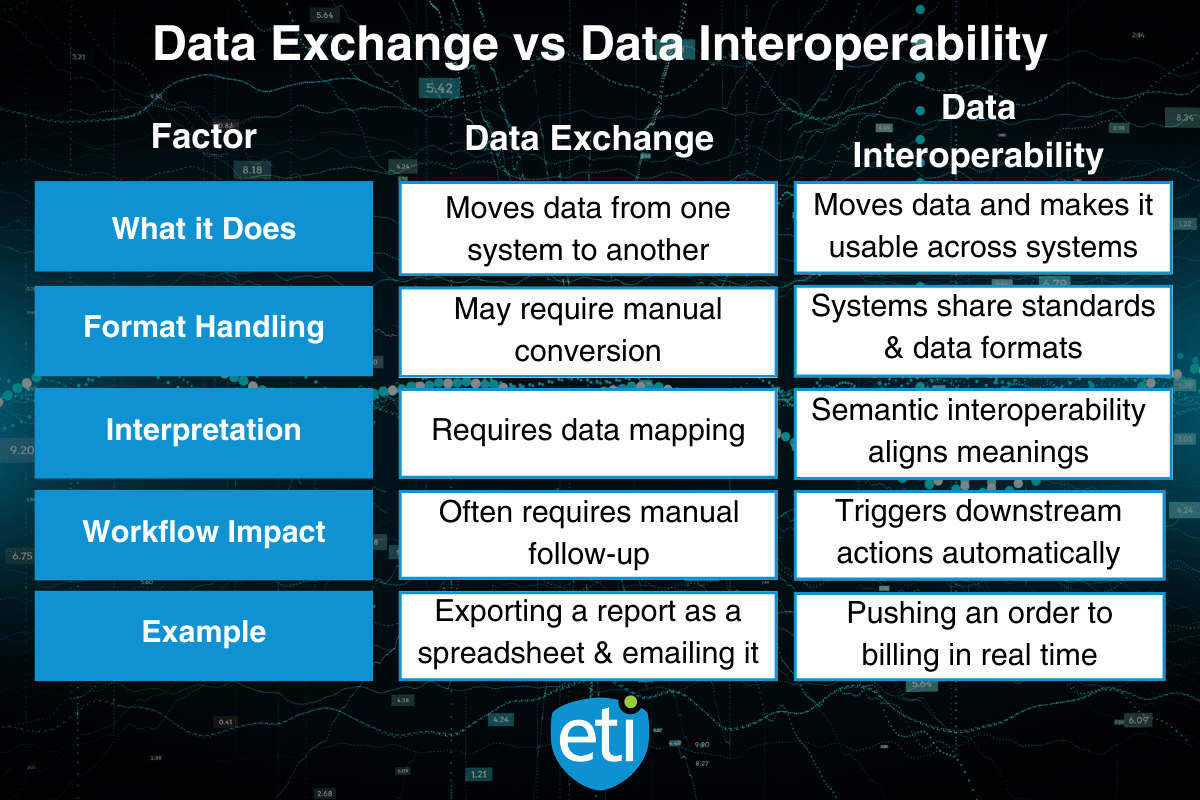

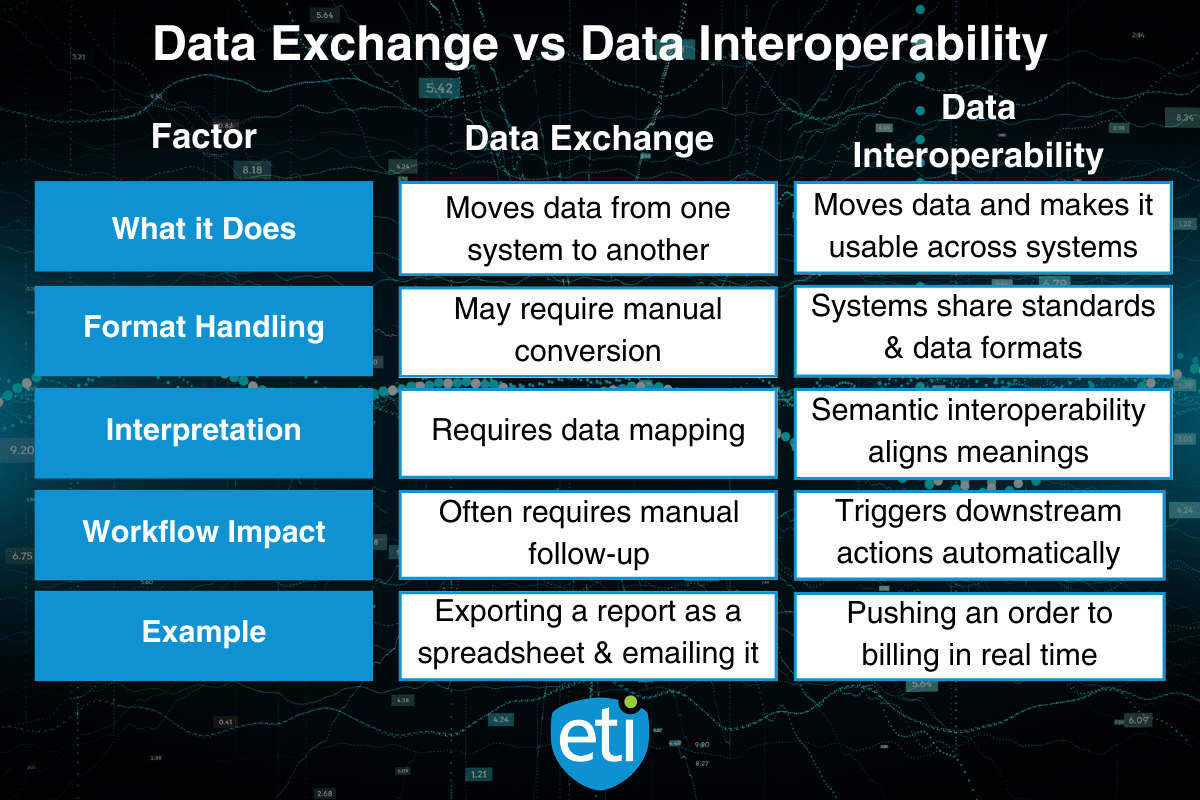

That sounds simple, but there’s a difference between moving data and making it useful. A CSV export that lands in someone’s inbox is data exchange. Data interoperability means the receiving system can read, interpret, and act on that information without extra steps.

Types of Data Interoperability

Not all interoperability works the same way. There are three levels to understand:

Structural Interoperability

Structural interoperability focuses on data formats. Systems agree on how data is packaged and transported. Think of it as making sure the envelope fits the mailbox. Common standards like XML, JSON, and HL7 handle this layer.

Semantic Interoperability

Semantic interoperability goes a step further. It means both systems understand the meaning behind each data element, not just the format. For example, if one system labels a field “install date” and another calls it “service activation date,” semantic interoperability maps those to the same concept.
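Here is a minimal sketch of that kind of mapping in Python. The system names (`field_service`, `provisioning`), the extra `acct`/`account` fields, and the canonical names (`service_start_date`, `account_id`) are all hypothetical and chosen only for illustration; a real mapping would come from your own data dictionary.

```python
# Hypothetical per-system field maps: source field name -> canonical name.
FIELD_MAPS = {
    "field_service": {
        "install date": "service_start_date",
        "acct": "account_id",
    },
    "provisioning": {
        "service activation date": "service_start_date",
        "account": "account_id",
    },
}


def to_canonical(system: str, record: dict) -> dict:
    """Rename a record's fields to the shared canonical vocabulary.

    Fields with no mapping keep their original name so nothing is
    silently dropped.
    """
    mapping = FIELD_MAPS[system]
    return {mapping.get(name, name): value for name, value in record.items()}


# Two systems describe the same fact with different labels.
from_field = to_canonical(
    "field_service", {"install date": "2024-03-01", "acct": "A-100"}
)
from_prov = to_canonical(
    "provisioning", {"service activation date": "2024-03-01", "account": "A-100"}
)
```

After mapping, both records use the same field names, so downstream systems can compare or merge them without knowing which platform produced them.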

Process Interoperability

Process interoperability connects workflows across systems. When a work order closes in one platform and automatically triggers an update in your billing system, that’s process-level interoperability in action.
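One common way to wire this up is publish/subscribe: the system that closes the work order announces an event, and other systems react to it. The sketch below uses a tiny in-process dispatcher as a stand-in for a real message bus or webhook layer. The event name `work_order.closed`, the payload fields, and the `billing_status` store are assumptions made for the example.

```python
from collections import defaultdict
from typing import Callable

# Event name -> list of handlers. A stand-in for a real message bus.
_subscribers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)


def subscribe(event: str, handler: Callable[[dict], None]) -> None:
    """Register a handler to be called whenever `event` is published."""
    _subscribers[event].append(handler)


def publish(event: str, payload: dict) -> None:
    """Deliver the payload to every handler registered for `event`."""
    for handler in _subscribers[event]:
        handler(payload)


# Hypothetical billing store, keyed by account ID.
billing_status: dict[str, str] = {}


def start_billing(payload: dict) -> None:
    """Billing-side reaction to a closed work order."""
    billing_status[payload["account_id"]] = "billable"


subscribe("work_order.closed", start_billing)

# Closing the work order in the field system publishes the event;
# billing updates itself without anyone re-keying the data.
publish("work_order.closed", {"work_order_id": "WO-1", "account_id": "A-100"})
```

Adding inventory or CRM to the same workflow means registering another handler, not changing the system that closes the work order.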

Most operators need all three working together. Format alignment alone won’t help if your systems interpret the same data element differently.

Data Exchange vs. Data Interoperability

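These two terms get used interchangeably, but they aren’t the same thing. Data exchange moves information from one system to another. Data interoperability goes further: the receiving system can read, interpret, and act on that data without extra steps or manual conversion.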

An interoperability framework ties these layers together with a shared set of open standards, application programming interfaces (APIs), and agreed-upon data formats. Industry standards like TMF APIs in telecom or FHIR in healthcare exist specifically to give different systems a common language.

The goal isn’t just to share data. It’s to make sure every system that touches that data can do something useful with it.

Why Data Interoperability Matters

For Telecom and Broadband Providers

Telecom operators run on layered systems. Provisioning, billing, field service, network monitoring, and CRM all handle different pieces of the operation. The problem starts when those systems don’t share data cleanly.

Without data interoperability, your team fills the gaps manually.

A technician finishes a job in the field, but the billing system doesn’t know until someone updates it. A service activation completes in one platform while the customer-facing portal still shows “pending.” These disconnects slow down workflows, create errors, and limit visibility across the business.

With interoperable systems, that manual work goes away.

Data flows between platforms automatically through APIs and shared data formats. A completed work order updates inventory, billing, and CRM without anyone re-keying information. Field service teams see real-time scheduling changes. Provisioning pushes status updates across every connected system.

The operational payoff is straightforward:

- Fewer manual handoffs between systems, which means fewer errors and less duplicate entry

- Faster service activation because provisioning data moves directly to downstream platforms

- Better visibility across departments: dispatch, billing, and network ops all work from the same data

- Reduced time to resolve issues when field teams and back-office systems stay in sync

For operators managing a mix of legacy and next-gen infrastructure, data interoperability is what keeps those different systems working as one operation instead of five disconnected ones.

ETI’s Intelegrate Connect is built to connect the systems telecom providers already use. If your team is spending time on manual handoffs between platforms, see how ETI can help close those gaps.

Interoperability in Action Across Other Industries

Data interoperability isn’t unique to telecom. The same principles show up wherever different systems need to share and use data across organizational boundaries.

Artificial Intelligence and Data Interoperability

AI systems are only as good as the data they can access. Data interoperability gives AI tools clean, connected data across multiple data sources. For telecom operators, features like predictive maintenance and intelligent dispatching perform better when underlying systems can actually share data.

GIS and Spatial Data

Esri’s ArcGIS Data Interoperability extension and ArcGIS Pro use spatial ETL tools to translate between hundreds of data formats, while Safe Software’s FME technology and the FME Workbench application let teams automate data transformation across multiple data sources.

For telecom operators, this has a direct application. ETI’s Intelegrate platform integrates with Esri’s geospatial environment to display real-time device telemetry on ArcGIS maps, syncing device adds, moves, and changes with spatial data. That kind of interoperability connection between operational systems and GIS platforms gives NOC teams geographic visibility into outages, device performance, and network assets without toggling between disconnected tools.

Healthcare and Public Health

Interoperability connects electronic health records, lab systems, and clinical data platforms so providers can access a complete patient picture at the point of care. Standards like Fast Healthcare Interoperability Resources (FHIR) give health information technology systems a shared language for exchanging patient data. The Centers for Medicare & Medicaid Services (CMS) has pushed interoperability requirements specifically to reduce data silos in the health system.

Government and Human Services

Interoperability frameworks help agencies share data across programs, from public health reporting to cross-border payments, while maintaining data privacy. Standardized data elements and common formats let a service provider in one department access relevant records from another without rebuilding integrations from scratch.

Key Components of an Interoperable System

Building data interoperability into your operation doesn’t happen with a single tool or a one-time project. It takes a combination of standards, technology, and data governance working together.

1. Common Standards and Industry Standards

Interoperable systems need a shared set of rules for how data is structured and exchanged. Open formats like JSON and XML, exchanged over RESTful APIs, give different systems a common language. Without agreed-upon standards, every integration becomes a custom build.

2. Application Programming Interfaces (APIs)

APIs are the connectors that let systems talk to each other. They allow one platform to request, send, or update data in another platform without manual intervention. A well-documented API strategy is one of the fastest ways to break down data silos between legacy and modern systems.
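As a rough illustration, here is what an "update data in another platform" call can look like using only Python's standard library. The base URL, the `/accounts/{id}` path, and the `status` field are hypothetical; a real platform's API documentation defines the actual endpoints and payloads. The request is built but not sent, so the sketch runs without network access.

```python
import json
from urllib.request import Request


def build_status_update(base_url: str, account_id: str, status: str) -> Request:
    """Build (but do not send) a PATCH request that updates an account's status."""
    body = json.dumps({"status": status}).encode("utf-8")
    return Request(
        url=f"{base_url}/accounts/{account_id}",
        data=body,
        method="PATCH",
        headers={"Content-Type": "application/json"},
    )


# Hypothetical billing endpoint on a placeholder domain.
req = build_status_update("https://billing.example.com/api", "A-100", "active")

# Sending it would be a call to urllib.request.urlopen(req),
# left out here so the example stays offline.
```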

3. Data Quality and Governance

Interoperability only works if the data moving between systems is accurate and consistent. That means clear ownership of data sources, validation rules to catch errors before they spread, and documented standards for how each data element is defined. Bad data moving faster across systems just creates problems faster.
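A validation rule can be as simple as a function that checks a record before it leaves the source system. The sketch below assumes a hypothetical record shape (`account_id`, `service_start_date`, `status`) and a made-up list of allowed statuses; your own rules would reflect how your teams define each data element.

```python
from datetime import date

# Assumed rules for this example only.
REQUIRED_FIELDS = ("account_id", "service_start_date", "status")
ALLOWED_STATUSES = {"pending", "active", "suspended"}


def validate(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record can be forwarded."""
    errors = []

    # Every required field must be present and non-empty.
    for field in REQUIRED_FIELDS:
        if not record.get(field):
            errors.append(f"missing {field}")

    # Status must be one of the agreed values.
    status = record.get("status")
    if status and status not in ALLOWED_STATUSES:
        errors.append(f"unknown status: {status}")

    # Dates must already be in ISO format (YYYY-MM-DD).
    start = record.get("service_start_date")
    if start:
        try:
            date.fromisoformat(start)
        except ValueError:
            errors.append(f"bad date: {start}")

    return errors


good = {"account_id": "A-100", "service_start_date": "2024-03-01", "status": "active"}
bad = {"account_id": "", "service_start_date": "03/01/2024", "status": "live"}
```

Running `validate(bad)` reports all three problems at once, which makes it easier to fix the record at the source instead of discovering the errors one system at a time.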

4. Consistent Data Formats

If one system stores dates as MM/DD/YYYY and another uses YYYY-MM-DD, even a simple data exchange can break. Standardizing data formats across platforms reduces transformation work and keeps information usable as it moves between systems.
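A small normalization step at the boundary is one way to handle this. The sketch below converts either of the two formats mentioned above into YYYY-MM-DD; the list of accepted formats is an assumption about what the source systems emit.

```python
from datetime import datetime

# Formats the source systems are assumed to emit, tried in order.
KNOWN_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")


def to_iso_date(value: str) -> str:
    """Convert a date string in any known format to YYYY-MM-DD."""
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(value, fmt).date().isoformat()
        except ValueError:
            continue  # Not this format; try the next one.
    raise ValueError(f"unrecognized date format: {value!r}")


normalized = [to_iso_date("03/01/2024"), to_iso_date("2024-03-01")]
```

One caveat: a value like 03/04/2024 looks the same in MM/DD/YYYY and DD/MM/YYYY, so the format has to be known per source system. It can't be reliably guessed from the value alone.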

5. Data Portability

Your data shouldn’t be locked inside a single vendor’s platform. Data portability means you can move information between systems, tools, or service providers without losing structure or meaning. This is especially important for operators evaluating new platforms or consolidating tools over time.

6. Interoperability Connection Points

Every place where two systems meet is a connection point. Mapping these out across your operation helps identify where data gets stuck, where manual workarounds exist, and where an API or integration layer would have the most impact.
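Even a simple inventory makes this mapping easier to act on. The sketch below records each connection point with how data currently moves across it, then lists the ones that aren't API-based, with manual handoffs first. The systems and methods shown are placeholder data, not a description of any real environment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConnectionPoint:
    source: str
    target: str
    method: str  # "api", "file_export", or "manual" in this sketch


# Hypothetical inventory; a real one would come from your own audit.
CONNECTIONS = [
    ConnectionPoint("field_service", "billing", "manual"),
    ConnectionPoint("provisioning", "crm", "api"),
    ConnectionPoint("provisioning", "billing", "file_export"),
    ConnectionPoint("field_service", "inventory", "manual"),
]


def needs_attention(connections: list[ConnectionPoint]) -> list[ConnectionPoint]:
    """Return connection points that are not API-based, manual ones first."""
    non_api = [c for c in connections if c.method != "api"]
    # sorted() is stable, so original order is kept within each group.
    return sorted(non_api, key=lambda c: c.method != "manual")


backlog = needs_attention(CONNECTIONS)
```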

These components don’t work in isolation. The value comes from layering them together so your systems can exchange data reliably, interpret it correctly, and trigger the right downstream actions.

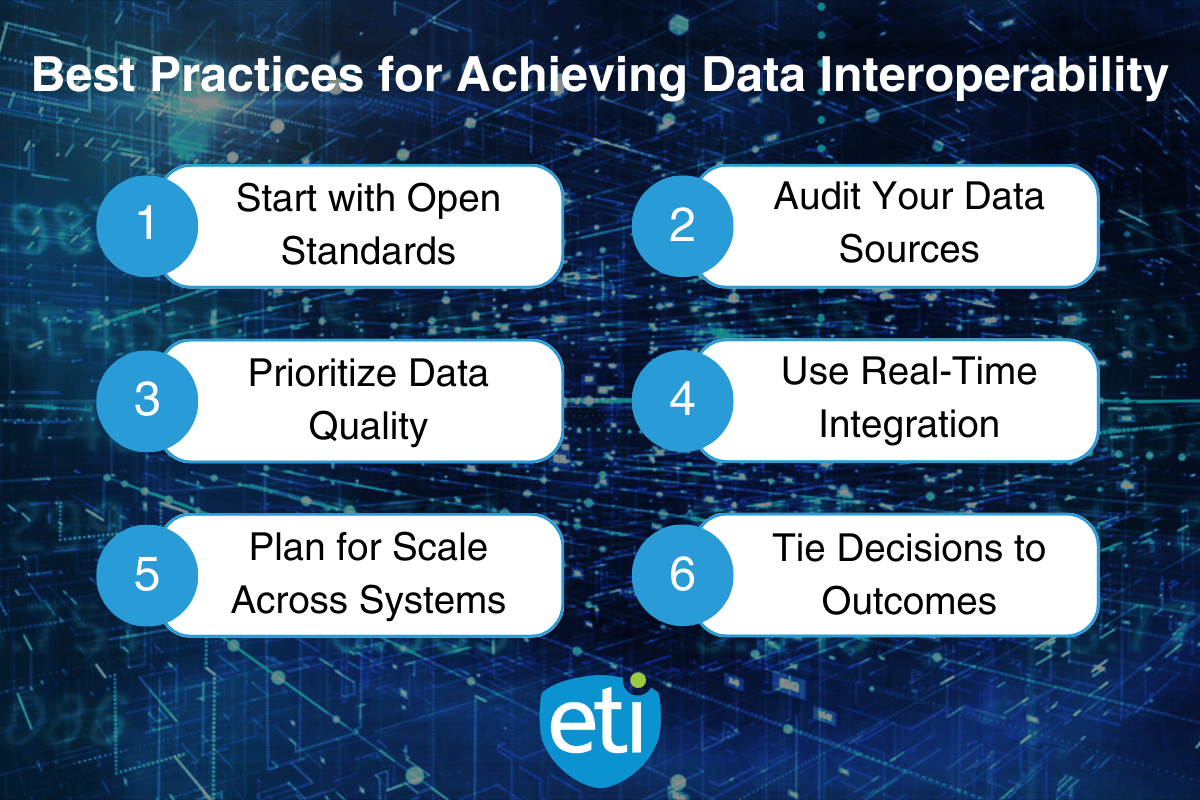

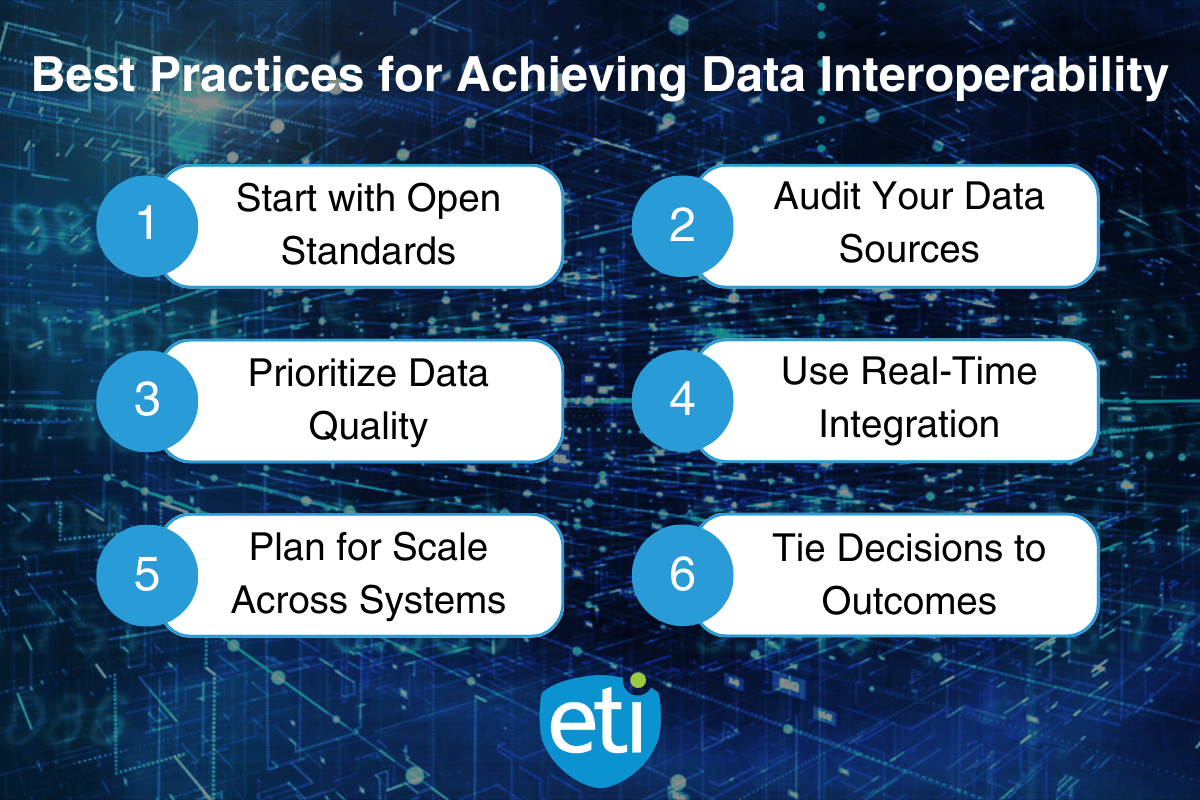

Best Practices for Achieving Data Interoperability

Getting to full data interoperability takes time, but you don’t have to overhaul everything at once. These best practices give your team a starting point that builds toward long-term results.

Start with Open Standards

Adopting open standards early reduces the cost and complexity of every integration that follows. When your systems speak the same language from the start, connecting new tools or replacing legacy platforms gets significantly easier. Look for vendors and platforms that support widely adopted industry standards rather than proprietary formats.

Audit Your Data Sources and Eliminate Silos

Before you can connect systems, you need to know where your data lives. Map out every data source across your operation, including the ones people forget about, like spreadsheets, shared drives, or one-off reporting tools. Identify where data silos exist and prioritize the connections that would have the biggest operational impact.

Prioritize Data Quality from the Start

Interoperability moves data faster. If that data is incomplete or inconsistent, you’re just scaling the problem. Set validation rules, define each data element clearly, and assign ownership so there’s accountability for what goes into and comes out of each system.

Use Real-Time Integration Where It Counts

Not every data exchange needs to happen in real time, but some do. Provisioning updates, field service scheduling, and customer-facing status changes all benefit from real-time data sharing. Focus your real-time integration efforts on the workflows where delays cause the most friction.

Plan for Scale Across Different Systems

Your tech stack will change over time. New platforms, new vendors, new service providers. Build your interoperability framework with that in mind. API-first architectures and modular integration layers make it easier to add or swap systems without rebuilding connections from scratch.
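One way to picture a modular integration layer is the adapter pattern: workflow code talks to a small interface, and each platform gets its own adapter behind it. The sketch below is illustrative only. The `BillingAdapter` interface, both adapter classes, and the strings they return are hypothetical stand-ins for real vendor calls.

```python
from typing import Protocol


class BillingAdapter(Protocol):
    """The only thing workflow code needs to know about a billing platform."""

    def activate(self, account_id: str) -> str: ...


class LegacyBillingAdapter:
    """Hypothetical adapter for an older billing platform."""

    def activate(self, account_id: str) -> str:
        return f"legacy:activated:{account_id}"


class NewBillingAdapter:
    """Hypothetical adapter for a replacement platform."""

    def activate(self, account_id: str) -> str:
        return f"new:activated:{account_id}"


def complete_activation(billing: BillingAdapter, account_id: str) -> str:
    """Workflow code depends on the interface, not on a specific vendor."""
    return billing.activate(account_id)


before = complete_activation(LegacyBillingAdapter(), "A-100")
after = complete_activation(NewBillingAdapter(), "A-100")
```

Swapping billing platforms then means writing one new adapter; `complete_activation` and everything that calls it stay the same.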

Tie Every Decision to an Operational Outcome

Each of these best practices should connect back to a measurable result: faster deployment, fewer errors, better scheduling, improved visibility, or reduced downtime. If an integration project can’t point to a specific workflow improvement, it’s worth asking whether it belongs on the priority list.

Where to Start with Data Interoperability

Data interoperability is how operators turn disconnected systems into a connected operation. It’s the difference between manually moving data between platforms and having that data flow where it needs to go, in a format every system can use.

The building blocks are straightforward: open standards, clean data, well-mapped connection points, and APIs that let your platforms communicate without custom workarounds. The payoff shows up in faster service activation, fewer manual handoffs, better visibility across departments, and more confident data-driven decisions.

You don’t need to solve it all at once. Start with the workflows where disconnected data causes the most friction, build from there, and keep every integration tied to a real operational outcome.

Want to see how this fits your environment? Contact ETI or Get a Demo to walk through your workflow and integration needs.

Frequently Asked Questions

What is data interoperability?

Data interoperability is the ability of different systems to exchange data and use it in a meaningful way without manual reformatting or re-entry. It means platforms can share, interpret, and act on data automatically.

What is the difference between data exchange and data interoperability?

Data exchange moves information from one system to another. Data interoperability goes further by making sure the receiving system can read, interpret, and act on that data without extra steps or manual conversion.

Why does data interoperability matter for telecom providers?

Telecom operators rely on multiple systems for provisioning, billing, field service, and CRM. Without data interoperability, teams fill the gaps manually. Interoperable systems reduce handoffs, speed up service activation, and give teams better visibility across the operation.

What are the main types of data interoperability?

The three main types are structural interoperability (shared data formats), semantic interoperability (shared meaning behind data elements), and process interoperability (connected workflows across systems).

How do you start building data interoperability?

Start by adopting open standards, auditing your data sources, and identifying where data silos cause the most friction. Prioritize data quality and focus real-time integration on the workflows where delays have the biggest operational impact.

© 2026 Enhanced Telecommunications.